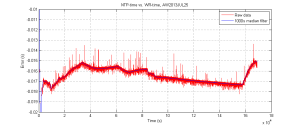

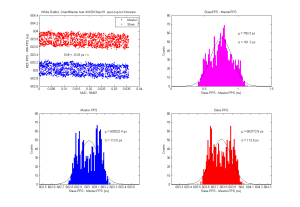

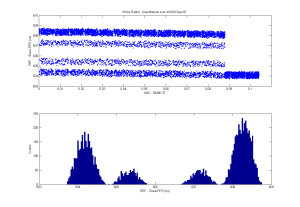

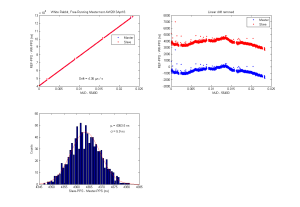

Here's a plot of the time error between the standard Unix system time, kept on time using NTP, and a much more accurate PTP server based on White Rabbit that runs on a fancy FPGA-based network card.

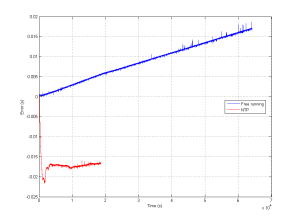

Note that without NTP a typical computer clock will have a frequency error of 10 ppm (parts per million) or more. This particular one measured about 40 ppm error in free-running mode (no NTP). That means that over this 16e4 s measurement we'd be off by about 6.4 seconds (40e-6 × 16e4 s, way off the chart) without NTP. With NTP the error seems to stay within 3 milliseconds or so. The offset of -16 milliseconds is not measured that accurately and could be caused by a number of things.
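The free-running estimate is just the fractional frequency error multiplied by the elapsed time. Here's a minimal sketch of that arithmetic, assuming the frequency error stays constant over the interval (a real oscillator also wanders with temperature and age). The helper name `drift_seconds` is made up for illustration, and one day is used as an example interval rather than the measurement duration above.

```python
def drift_seconds(frequency_error_ppm: float, duration_s: float) -> float:
    """Accumulated time error of a free-running clock.

    Assumes a constant frequency offset, given in parts per million,
    over the whole interval (an illustrative simplification).
    """
    return frequency_error_ppm * 1e-6 * duration_s


if __name__ == "__main__":
    one_day_s = 24 * 60 * 60  # 86400 s, illustrative interval
    for ppm in (10, 40):
        print(f"{ppm} ppm over one day: {drift_seconds(ppm, one_day_s):.3f} s")
```

So even the "typical" 10 ppm clock drifts by the better part of a second per day if left alone, which is a few hundred times more than the millisecond-level wiggle seen with NTP running.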